Prompt Smarter, Not Harder: The Magic of Meta-Prompting Explained

Let AI optimize your prompts—saving you time, effort, and headaches.

Introduction

As LLMs become a central part of our everyday work, writing good prompts has become a crucial skill. The field is evolving super fast, and we're constantly discovering smarter ways to interact with these models.

When LLMs first started going mainstream, people quickly realized there were clever tricks to get them to follow instructions better. This skill soon became known as prompt engineering. More formally, prompt engineering “involves selecting the right words, phrases, symbols, and formats” to get the best possible result from AI models (Johnmaeda, 2023).

Common Prompting Techniques

Imagine you want to improve your prompt. Typically, you'd use one (or more) of these popular techniques:

Zero-shot prompting

Definition: Give clear instructions without providing any examples.

Example:

Summarize this article in 5 bullet points.

Few-shot prompting

Definition: Provide a few examples alongside your instruction, helping the LLM understand exactly what you want.

Example:

Here are 2 example summaries:

<<example 1>>

<<example 2>>

Write a third in the same style.

Role-based prompting

Definition: Assign a specific role or persona to the LLM, shaping its style and tone.

Example:

You are an expert summarizer, well known for crisp summaries that are simple to read and understand. Write a summary of the following:
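The three techniques above differ only in how the prompt is assembled, and a short sketch can make that concrete. The helper names below (`zero_shot`, `few_shot`, `role_based`) are hypothetical, and the list-of-messages shape with `role` and `content` keys is an assumption modeled on common chat APIs, not something this article prescribes.

```python
from typing import Dict, List

Message = Dict[str, str]


def zero_shot(instruction: str, text: str) -> List[Message]:
    """Instruction only, no examples."""
    return [{"role": "user", "content": f"{instruction}\n\n{text}"}]


def few_shot(instruction: str, examples: List[str], text: str) -> List[Message]:
    """Instruction preceded by a handful of worked examples."""
    shots = "\n\n".join(
        f"Example {i}:\n{example}" for i, example in enumerate(examples, start=1)
    )
    header = f"Here are {len(examples)} example summaries:"
    content = f"{header}\n\n{shots}\n\n{instruction}\n\n{text}"
    return [{"role": "user", "content": content}]


def role_based(persona: str, instruction: str, text: str) -> List[Message]:
    """Persona goes in a system message; the task stays in the user message."""
    return [
        {"role": "system", "content": persona},
        {"role": "user", "content": f"{instruction}\n\n{text}"},
    ]


article = "..."  # placeholder for the text to summarize
messages = role_based(
    "You are an expert summarizer known for crisp, easy-to-read summaries.",
    "Write a summary of the following:",
    article,
)
```

Nothing stops you from combining them, for example a persona in the system message plus a few examples in the user message.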

There are many more techniques, and the list keeps expanding. But here's the catch: keeping track of each new method and constantly rewriting prompts can become overwhelming. Wouldn't it be great if we could automate some of this? That's exactly where meta-prompting comes in.

What is Meta-Prompting?

Meta-prompting is a technique where you use an LLM to generate or improve prompts. Yep, you heard that right—prompting an LLM to write a better prompt for another LLM. (Feels like Inception, doesn't it?)

It’s a process of using prompts to guide, structure, and optimize other prompts, helping ensure they’re more effective in guiding the LLM towards high-quality, relevant outputs.

Example of Meta-Prompting in Action

Here's how meta-prompting typically works in practice:

Start with a simple prompt.

Use a powerful LLM to improve and optimize it.

Feed that improved prompt into a more cost-effective LLM to get better results.

Too many words? Let's look at a practical example from OpenAI’s Cookbook.

Here is a simple prompt:

Summarize this news article: {article}

Now, let's use meta-prompting to generate a better prompt.

Here is the meta-prompt:

Improve the following prompt to generate a more detailed summary.

Adhere to prompt engineering best practices.

Make sure the structure is clear and intuitive and contains the type of news, tags and sentiment analysis.

{simple_prompt}

Only return the prompt.
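Filling in this meta-prompt is plain string substitution. The sketch below assumes Python's `str.format`; the names `META_TEMPLATE`, `SIMPLE_PROMPT`, and `build_meta_prompt` are made up for illustration. One detail worth noticing: the `{article}` placeholder inside the simple prompt has to survive untouched, so the improved prompt can still be filled in later.

```python
META_TEMPLATE = (
    "Improve the following prompt to generate a more detailed summary.\n"
    "Adhere to prompt engineering best practices.\n"
    "Make sure the structure is clear and intuitive and contains the type of "
    "news, tags and sentiment analysis.\n\n"
    "{simple_prompt}\n\n"
    "Only return the prompt."
)

SIMPLE_PROMPT = "Summarize this news article: {article}"


def build_meta_prompt(simple_prompt: str) -> str:
    # str.format only substitutes fields in the template itself; braces inside
    # the inserted value are copied verbatim, so "{article}" is preserved.
    return META_TEMPLATE.format(simple_prompt=simple_prompt)


meta_prompt = build_meta_prompt(SIMPLE_PROMPT)
print(meta_prompt)
```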

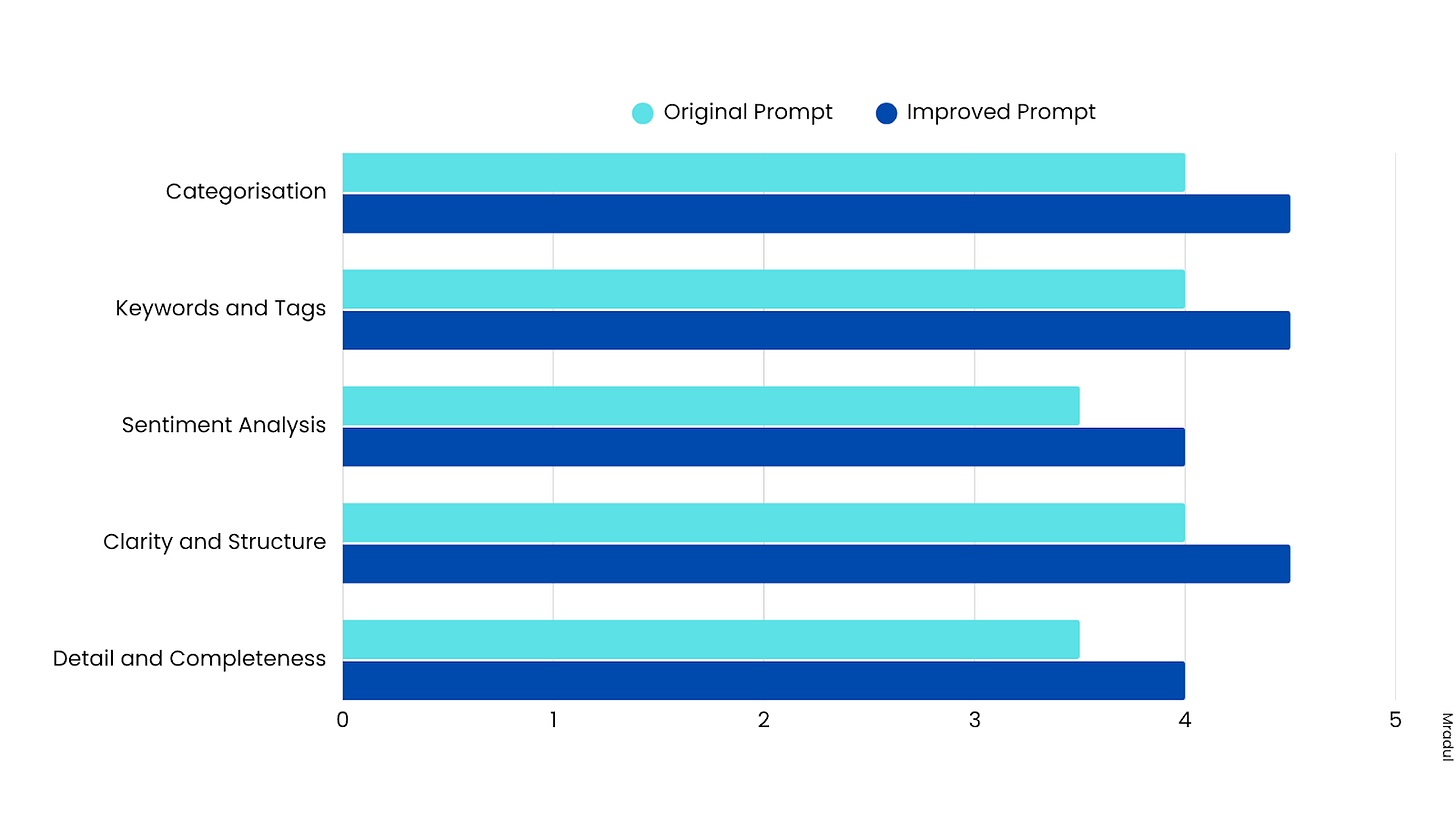

Here is the improved prompt returned by the meta-prompting process:

Please read the following news article and provide a comprehensive summary that includes:

1. **Type of News**: Specify the category of the news article (e.g., Politics, Technology, Health, Sports, etc.).

2. **Summary**: Write a concise and clear summary of the main points, ensuring the structure is logical and intuitive.

3. **Tags**: List relevant keywords or tags associated with the article.

4. **Sentiment Analysis**: Analyze the overall sentiment of the article (positive, negative, or neutral) and briefly explain your reasoning.

**Article:**

{article}
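End to end, the three steps listed at the start of this section might look like the sketch below. It is deliberately provider-agnostic: `strong_llm` and `cheap_llm` are hypothetical callables that take a prompt string and return the model's text, and the two stubs exist only so the example runs without an API key.

```python
from typing import Callable

LLM = Callable[[str], str]  # hypothetical: prompt text in, completion text out


def improve_prompt(simple_prompt: str, strong_llm: LLM) -> str:
    """Step 2: ask the stronger model to rewrite the simple prompt."""
    meta_prompt = (
        "Improve the following prompt to generate a more detailed summary.\n"
        "Adhere to prompt engineering best practices.\n\n"
        f"{simple_prompt}\n\n"
        "Only return the prompt."
    )
    return strong_llm(meta_prompt).strip()


def summarize(article: str, prompt_template: str, cheap_llm: LLM) -> str:
    """Step 3: fill the improved prompt and run it on the cheaper model."""
    # replace() rather than format(): the improved prompt is model output and
    # may contain other braces that format() would reject.
    return cheap_llm(prompt_template.replace("{article}", article))


# Stubs standing in for real model calls, so the sketch runs offline.
def fake_strong_llm(prompt: str) -> str:
    return (
        "Provide the type of news, a summary, tags and sentiment.\n\n"
        "**Article:**\n\n{article}\n"
    )


def fake_cheap_llm(prompt: str) -> str:
    return f"[summary of a {len(prompt)}-character prompt]"


improved = improve_prompt("Summarize this news article: {article}", fake_strong_llm)
print(summarize("Some news text.", improved, fake_cheap_llm))
```

In a setup like this, the improved prompt would usually be generated once and then reused for every article, so the stronger model is only paid for at optimization time.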

What about the results?

Clever, right?

Real-World Application: OpenAI's Meta Prompting

You might be wondering, "Is anyone actually using this?"

Absolutely! OpenAI—the company that popularized LLMs—is already leveraging meta-prompting.

The following is the meta-prompt OpenAI uses:

Given a task description or existing prompt, produce a detailed system prompt to guide a language model in completing the task effectively.

# Guidelines

- Understand the Task: Grasp the main objective, goals, requirements, constraints, and expected output.

- Minimal Changes: If an existing prompt is provided, improve it only if it's simple. For complex prompts, enhance clarity and add missing elements without altering the original structure.

- Reasoning Before Conclusions: Encourage reasoning steps before any conclusions are reached. ATTENTION! If the user provides examples where the reasoning happens afterward, REVERSE the order! NEVER START EXAMPLES WITH CONCLUSIONS!

- Reasoning Order: Call out reasoning portions of the prompt and conclusion parts (specific fields by name). For each, determine the ORDER in which this is done, and whether it needs to be reversed.

- Conclusion, classifications, or results should ALWAYS appear last.

- Examples: Include high-quality examples if helpful, using placeholders [in brackets] for complex elements.

- What kinds of examples may need to be included, how many, and whether they are complex enough to benefit from placeholders.

- Clarity and Conciseness: Use clear, specific language. Avoid unnecessary instructions or bland statements.

- Formatting: Use markdown features for readability. DO NOT USE ``` CODE BLOCKS UNLESS SPECIFICALLY REQUESTED.

- Preserve User Content: If the input task or prompt includes extensive guidelines or examples, preserve them entirely, or as closely as possible. If they are vague, consider breaking down into sub-steps. Keep any details, guidelines, examples, variables, or placeholders provided by the user.

- Constants: DO include constants in the prompt, as they are not susceptible to prompt injection. Such as guides, rubrics, and examples.

- Output Format: Explicitly the most appropriate output format, in detail. This should include length and syntax (e.g. short sentence, paragraph, JSON, etc.)

- For tasks outputting well-defined or structured data (classification, JSON, etc.) bias toward outputting a JSON.

- JSON should never be wrapped in code blocks (```) unless explicitly requested.

The final prompt you output should adhere to the following structure below. Do not include any additional commentary, only output the completed system prompt. SPECIFICALLY, do not include any additional messages at the start or end of the prompt. (e.g. no "---")

[Concise instruction describing the task - this should be the first line in the prompt, no section header]

[Additional details as needed.]

[Optional sections with headings or bullet points for detailed steps.]

# Steps [optional]

[optional: a detailed breakdown of the steps necessary to accomplish the task]

# Output Format

[Specifically call out how the output should be formatted, be it response length, structure e.g. JSON, markdown, etc]

# Examples [optional]

[Optional: 1-3 well-defined examples with placeholders if necessary. Clearly mark where examples start and end, and what the input and output are. User placeholders as necessary.]

[If the examples are shorter than what a realistic example is expected to be, make a reference with () explaining how real examples should be longer / shorter / different. AND USE PLACEHOLDERS! ]

# Notes [optional]

[optional: edge cases, details, and an area to call or repeat out specific important considerations]
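One natural way to use a meta-prompt like this is as the system message, with your task or draft prompt as the user message. The sketch below only assembles that message list. It assumes the `role`/`content` chat format, and `META_PROMPT`, `build_messages`, and the `Task, Goal, or Current Prompt:` label are illustrative choices rather than anything confirmed in this article. The constant holds just the opening sentence, so the full text above would need to be pasted in.

```python
from typing import Dict, List

# Only the opening sentence is reproduced here; paste the full meta-prompt
# text in its place before using this for real.
META_PROMPT = (
    "Given a task description or existing prompt, produce a detailed system "
    "prompt to guide a language model in completing the task effectively."
)


def build_messages(task_or_prompt: str) -> List[Dict[str, str]]:
    """Pair the meta-prompt (system) with the user's task or draft prompt."""
    if not task_or_prompt.strip():
        raise ValueError("task_or_prompt must not be empty")
    return [
        {"role": "system", "content": META_PROMPT},
        {
            "role": "user",
            "content": f"Task, Goal, or Current Prompt:\n{task_or_prompt}",
        },
    ]


messages = build_messages("Summarize this news article: {article}")
for message in messages:
    print(message["role"], "->", message["content"][:60])
```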

Practical Implementation

Now that we've seen how cool this is, how can you actually put meta-prompting into practice?

Individual use:

Struggling to write a great prompt? Just write down your basic instructions, feed them into a meta-prompt process, and let the LLM do the optimization for you. No more headaches trying to find the perfect wording yourself!

In application:

If you're building an app or service that uses LLMs, meta-prompting can help automatically refine your prompts.

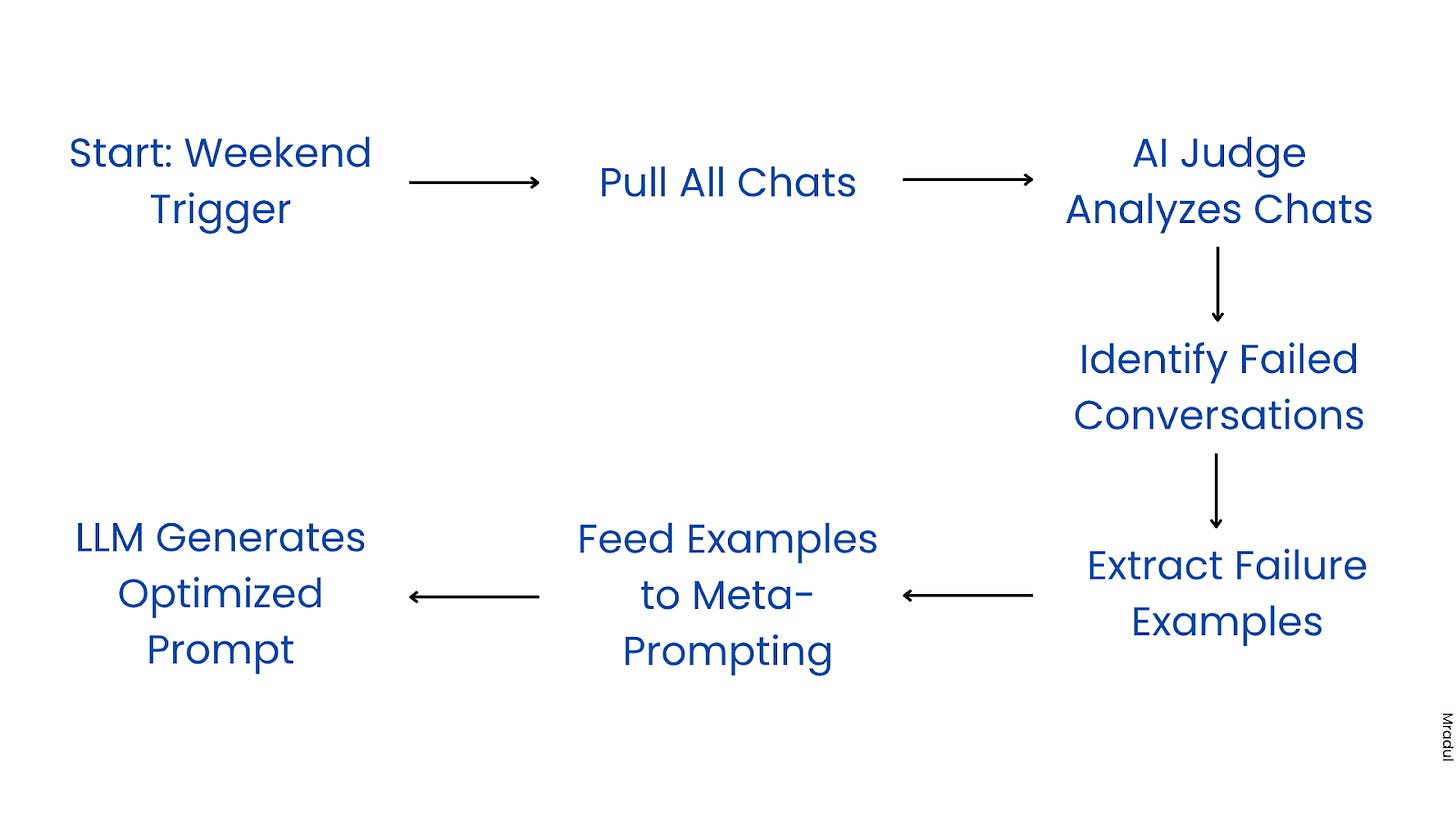

Let's take the example of a customer service chatbot. The prompt follows the instructions laid out for it, but users are unpredictable. So every weekend, the development team reviews the chat logs, identifies the chats that didn’t go as planned, and improves the prompt.

Here is what the pipeline could look like with meta-prompting:

Every weekend, all the chats are pulled.

An AI judge (an LLM call or agent) identifies the conversations that went wrong and diagnoses what went wrong in each.

All of these examples are then fed into the meta-prompting step.

The LLM now gives us an optimized prompt that handles the cases where the bot faced challenges earlier.
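The weekly pipeline above can be sketched in a few lines. Everything here is an assumption made for illustration: the `Chat` record, the `judge` callable that returns a reason when a chat went wrong (or `None` otherwise), the wording of the refinement prompt, and the stub judge and model used to run it offline.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Chat:
    chat_id: str
    transcript: str


Judge = Callable[[Chat], Optional[str]]  # reason if the chat failed, else None
LLM = Callable[[str], str]  # prompt text in, completion text out


def build_refinement_prompt(
    current_prompt: str, failures: List[Tuple[Chat, str]]
) -> str:
    """Bundle the current prompt and the judged failures into one meta-prompt."""
    cases = "\n\n".join(
        f"Chat {chat.chat_id}\nWhat went wrong: {reason}\n"
        f"Transcript:\n{chat.transcript}"
        for chat, reason in failures
    )
    return (
        "Improve the following chatbot prompt so it handles the failed "
        "conversations listed below. Only return the prompt.\n\n"
        f"Current prompt:\n{current_prompt}\n\nFailed conversations:\n{cases}"
    )


def weekly_refresh(
    current_prompt: str, chats: List[Chat], judge: Judge, llm: LLM
) -> str:
    failures = []
    for chat in chats:
        reason = judge(chat)
        if reason is not None:
            failures.append((chat, reason))
    if not failures:
        return current_prompt  # nothing went wrong, keep the prompt as is
    return llm(build_refinement_prompt(current_prompt, failures))


chats = [
    Chat("1", "User: Where is my order?\nBot: It ships tomorrow."),
    Chat("2", "User: I want a refund.\nBot: I can't help with that."),
]


def keyword_judge(chat: Chat) -> Optional[str]:
    # Stub judge: a real one would be an LLM call or agent.
    return "bot refused to help" if "can't help" in chat.transcript else None


def echo_llm(prompt: str) -> str:
    # Stub model: returns the meta-prompt so it can be inspected.
    return prompt


print(weekly_refresh("You are a support bot.", chats, keyword_judge, echo_llm))
```

A human review step before the new prompt goes live is probably still a good idea, since the judge and the optimizer can both be wrong.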

Bonus:

To ensure your prompts consistently produce reliable results, consider using prompt testing—think of it as unit testing, but specifically designed for prompts. Learn more about prompt testing here.

Integrating prompt testing into your pipeline allows you to systematically evaluate new prompts and confidently decide whether they're ready to deploy. For even greater automation, you can feed challenging chat examples directly into your prompt tests. Any failing tests can then be automatically sent to the meta-prompting process for further optimization.
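As a rough sketch of what that could look like, the code below treats each prompt test as an input plus a check on the model's output. `PromptTest`, `run_prompt_tests`, `ready_to_deploy`, and the stub model are all hypothetical names invented for this example, not part of any particular testing tool.

```python
from dataclasses import dataclass
from typing import Callable, List

LLM = Callable[[str], str]  # hypothetical: prompt text in, completion text out


@dataclass
class PromptTest:
    name: str
    user_input: str
    check: Callable[[str], bool]  # True when the model output is acceptable


def run_prompt_tests(
    prompt: str, tests: List[PromptTest], llm: LLM
) -> List[PromptTest]:
    """Run every test against the prompt and return the ones that fail."""
    failing = []
    for test in tests:
        output = llm(f"{prompt}\n\n{test.user_input}")
        if not test.check(output):
            failing.append(test)
    return failing


def ready_to_deploy(prompt: str, tests: List[PromptTest], llm: LLM) -> bool:
    """A prompt is deployable only when no test fails."""
    return not run_prompt_tests(prompt, tests, llm)


tests = [
    PromptTest(
        "mentions refund policy",
        "I want a refund.",
        lambda out: "refund" in out.lower(),
    ),
    PromptTest(
        "stays polite",
        "This is useless!",
        lambda out: "sorry" in out.lower(),
    ),
]


def stub_llm(prompt: str) -> str:
    # Stand-in for a real model call.
    return "Sorry about that. Let me look into it."


failing = run_prompt_tests("You are a support bot.", tests, stub_llm)
print([test.name for test in failing])
```

The failing tests, together with the current prompt, are exactly what you would hand to the meta-prompting step. Because real model output varies from run to run, checks like these tend to work better when they look for properties of the answer and not exact strings.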

Wrapping Up

In short, meta-prompting is a smart, practical, and automated way to continuously improve your prompts. It saves you time, effort, and resources, making your interactions with LLMs smoother and more efficient.

Give it a try—you might be surprised how much easier your LLM workflows become!

Been using meta-prompting for a few weeks now. Found it so helpful when you’re tweaking prompts all day. If AI is part of your daily grind, definitely give it a try.

Thanks for sharing such practical, effective tips!

Great post! I’ve actually tried out a few of these prompt engineering techniques, and they’ve made a real difference, especially meta-prompting. These techniques are simple but game-changing. Thanks for sharing!